The vast majority of the computing power available to the materials science community is consumed by a relatively small number of workhorse methods, such as molecular dynamics and density functional theory. These methods have been adapted to run on parallel platforms for decades, but the focus has firmly been on weak scaling, i.e., on scaling the problem size with the number of processors. While high-performance weak-scaling implementations of these methods are extremely valuable, the focus on increasing length scales limits opportunities for scientific discovery. Transformative impact requires the capability to leverage computing resources to simultaneously and flexibly increase length scales, time scales, and accuracy.

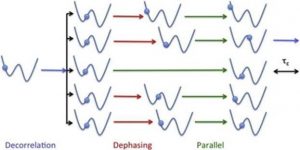

Increasing simulation time scales requires a deep understanding of the mathematics of rare events in order to inform novel methods that are specially tailored from the start, as well as strong-scaling computational engines that can leverage large computational resources on problems of relatively small size. This requires a dramatic rethinking of how basic algorithms in materials science are derived and implemented. Similarly, the exponential increase in computer resources now enables very high accuracy simulations with methods such as coupled cluster or quantum Monte Carlo. These methods are extremely powerful, but they tend to scale poorly with the number of electrons. The development of new flexible methods where accuracy can be systematically adjusted in order to modify the tradeoff between size and time scales would therefore be extremely beneficial.

This workshop will focus on recent developments in mathematical approaches to intensive calculations at massive scale, with an emphasis on new ways to improve scalability (both weak and strong) and to extend simulations along the size, time, and accuracy axes simultaneously.

This workshop will include a poster session; a request for posters will be sent to registered participants in advance of the workshop.

Virginie Ehrlacher (École Nationale des Ponts-et-Chaussées)

Vikram Gavini (University of Michigan)

Danny Perez (Los Alamos National Laboratory)

Steve Plimpton (Sandia National Laboratories)