Explainable AI for the Sciences: Towards Novel Insights

Overview

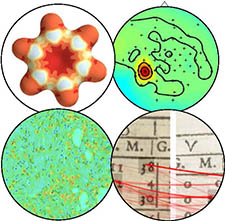

Explainable AI (XAI) has become an active subfield of machine learning (ML). XAI aims to increase the transparency of ML models so that a model’s predictions can be better understood, evaluated, and acted upon. Explanation techniques are now available for many standard ML models and applications, typically in the form of heatmaps that visualize which features most strongly influenced a model’s prediction for a given data point. In ML solutions based on matrix and tensor decompositions, explainability can be more direct when the model is a good match for the data. Explainability is critical not only because one would like to have confidence in the solutions, but also because one would like to obtain further insights about the problem from the learned models. XAI has become essential in a variety of disciplines, e.g., the sciences, medicine, and the humanities, where ML has become an indispensable tool for data analysis and modeling. Recently, XAI has even helped to yield genuinely novel scientific insights in disciplines such as medicine and chemistry. Previous workshops have mainly focused on XAI tailored to specific domains. The purpose of this workshop is to explore whether there are common patterns of XAI challenges across disciplines.

This workshop will include a poster session; a request for posters will be sent to registered participants in advance of the workshop.